We Recreated Apple's '1984' Super Bowl Ad Using AI for $100. Then We Measured Both.

— CASE STUDY: PRE-PRODUCTION TESTING

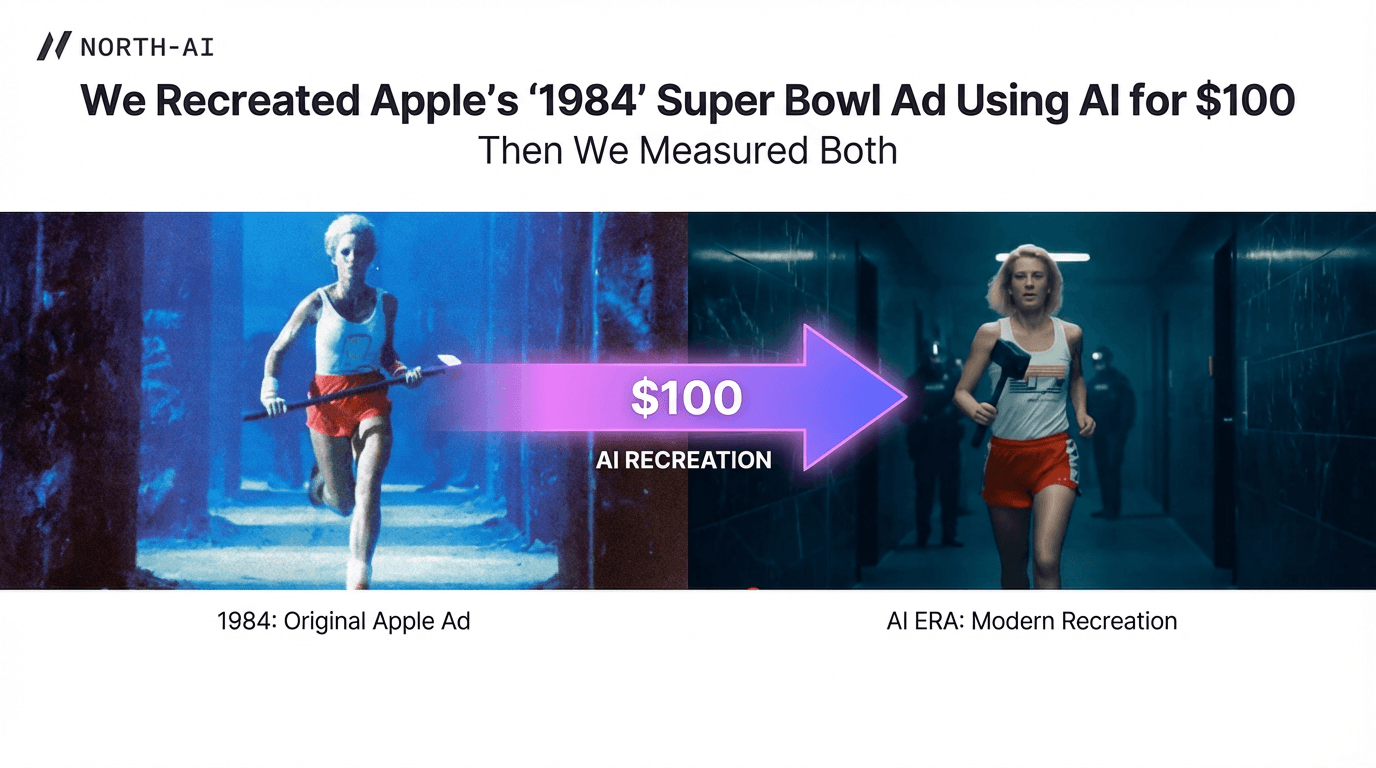

North AI's cognitive analysis platform compared an AI-generated shot-for-shot recreation of Apple's famous '1984' Super Bowl ad against the original production. Average variance across 8 cognitive and behavioural metrics: 10.11%.

10.11% average variance | 8 metrics | $100 AI production cost | 4 hrs AI production time

Background

Apple's "1984" Super Bowl ad had a single national broadcast, during Super Bowl XVIII on 22 January 1984. Directed by Ridley Scott, it introduced the Macintosh computer to the world. The production budget was approximately $900,000 at the time (roughly $3 million in 2025 dollars). It is widely regarded as one of the most influential television advertisements ever made.

This study tests a specific question: can an AI-generated recreation of that ad produce the same cognitive response in viewers as the original? If so, it has a direct implication for creative testing at the ideation stage, before any production budget is committed.

The AI Recreation

The AI-generated version used in this study was produced by The Authentic AI, a private research institute focused on AI experimentation. The project was led by Eric Harvey and completed in approximately four hours.

The process involved breaking the original ad down shot by shot, generating descriptive prompts for each scene using Google Whisk, and producing video content using Google Veo 2. A custom AI avatar was created for the "Big Brother" character using Google Imagen and HeyGen, with audio isolated and cleaned via ElevenLabs. The final edit was assembled in CapCut.

The shot-by-shot AI recreation was produced by The Authentic AI. Full methodology, prompts, and process: theauthentic.ai/ourthoughts/ai-in-action-recreating-an-iconic-apple-spot-in-4-hours

Fig. 1 — Shot-by-shot breakdown of the original Apple "1984" ad used to generate AI prompts for each scene. Source: The Authentic AI.

Visual Comparison: Original vs AI

The following images show corresponding frames from the original 1984 production and the AI-generated recreation. Shot composition was closely replicated. Visual quality and emotional texture differ.

Fig. 2 — Reference frame from the original Apple "1984" ad used as the source image for AI prompt generation via Google Whisk. Source: The Authentic AI.

Fig. 3 — AI-generated version of the corresponding scene, produced using Google Veo 2 after approximately 10 prompt iterations. Structural composition aligns; visual quality is lower. Source: The Authentic AI.

Fig. 4 — Final assembled AI-generated version as used in the North AI cognitive analysis. Produced in ~4 hours using Google Whisk, Google Veo 2, Google Imagen, HeyGen, ElevenLabs, and CapCut. Source: The Authentic AI.

Fig. 5 — Final assembly in CapCut, showing shots sequenced to match the original runtime. This is the version tested in North AI's cognitive analysis. Source: The Authentic AI.

Methodology

North AI's cognitive analysis platform was used to measure viewer response across both versions. The platform records involuntary physical responses second by second as viewers watch content. No surveys. No self-reported data.

Measurement covered five areas, reported as eight metrics in the results (the two blink measures and the two gaze measures are each combined into a single figure):

1. Blink tracking: blink rate and blink synchronisation, measured frame by frame

2. Gaze analysis: content gaze (what within the frame viewers focus on) and screen gaze (overall attention to the screen)

3. Head movement: physical head movement as a proxy for attention and alertness

4. Cognitive metrics: engagement, emotional response, attention degree, cognitive load, and brain load

5. Shot-by-shot comparison: both videos analysed at shot level, not as averages across the full runtime

Results were compared across all eight metrics and average variance was calculated.

Results

The table below shows full results for all eight cognitive and behavioural metrics. The final row shows the average variance across all metrics.

| Metric | AI version | Original |
| --- | --- | --- |
| Engagement | 51% | 33% |
| Emotional Response | 22% | 35% |
| Attention Degree | 34% | 8% |
| Cognitive Load | 18% | 24% |
| Brain Load | 2% | 13% |
| Blinks (Rate + Synchronisation) | 6.9% | 4% |
| Gaze (Content + Screen Gaze) | 97% | 94% |
| Head Movements | 3% | 4% |
| Average Variance Across All 8 Metrics | 10.11% | |
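The article does not spell out how the average variance is calculated. One reading that reproduces the reported figure is the mean of the absolute percentage-point gaps between the two versions: the eight gaps sum to 80.9 points, and 80.9 / 8 = 10.1125, which rounds to 10.11. The sketch below works through that arithmetic under this assumed definition. The function and variable names are illustrative and are not part of North AI's platform.

```python
# (AI version %, original %) for each metric, copied from the results table.
RESULTS = {
    "Engagement": (51.0, 33.0),
    "Emotional Response": (22.0, 35.0),
    "Attention Degree": (34.0, 8.0),
    "Cognitive Load": (18.0, 24.0),
    "Brain Load": (2.0, 13.0),
    "Blinks": (6.9, 4.0),
    "Gaze": (97.0, 94.0),
    "Head Movements": (3.0, 4.0),
}


def absolute_gaps(results):
    """Absolute percentage-point gap between the two versions, per metric."""
    return {name: abs(ai - original) for name, (ai, original) in results.items()}


def average_variance(results):
    """Mean of the per-metric absolute gaps (an assumed definition)."""
    gaps = absolute_gaps(results)
    return sum(gaps.values()) / len(gaps)


if __name__ == "__main__":
    for name, gap in absolute_gaps(RESULTS).items():
        print(f"{name}: {gap:.1f} points")
    # Approximately 10.11, matching the headline figure.
    print(f"Average: {average_variance(RESULTS):.4f} points")
```

Because this is an unweighted mean, large gaps such as attention degree (26 points) and small ones such as head movement (1 point) contribute equally, so the headline number says little about any single metric.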

Analysis

METRICS WHERE THE AI VERSION SCORED HIGHER

Engagement was 18 percentage points higher in the AI version (51% vs 33%). Attention degree was 26 points higher (34% vs 8%). Blink metrics were also slightly elevated (6.9% vs 4%).

These results do not indicate that the AI version is a more effective advertisement. Elevated engagement in a controlled test context can reflect novelty effects — viewers attending more carefully because content appears slightly unfamiliar. This does not map directly to real-world ad performance. For pre-production testing, it indicates that AI-generated content can hold viewer attention. That is a meaningful structural signal.

METRICS WHERE THE ORIGINAL SCORED HIGHER

Emotional response was 13 percentage points higher in the original production (35% vs 22%). Brain load was 11 points higher (13% vs 2%). Cognitive load was also higher in the original, by 6 points (24% vs 18%).

The original generated substantially more emotional activation. Direction, performances, cinematography, and score contribute to emotional depth in ways current AI video tools do not replicate. Teams using AI pre-production testing should expect the finished production to outperform on emotional metrics. This is a predictable and known limitation.

METRICS WHERE BOTH VERSIONS ALIGNED CLOSELY

Gaze was nearly identical: 97% for the AI version versus 94% for the original, a 3-point difference. Head movement differed by just 1 percentage point. These are the most practically significant results for pre-production use.

"The AI version directed viewer attention to the same places. The emotional weight of those moments was different — but the visual structure worked."

Shot composition, pacing, and visual flow produced closely aligned gaze patterns across both versions. Structural creative decisions — where to place emphasis, how to pace cuts, which elements to foreground — can be tested and validated using AI-generated content before production begins.
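The three groupings in this analysis can be reproduced from the reported figures with a simple rule. The sketch below is illustrative only: the 5-point alignment threshold and all names are assumptions chosen for the example, not values used in the study.

```python
# (AI version %, original %) for each metric, copied from the results table.
RESULTS = {
    "Engagement": (51.0, 33.0),
    "Emotional Response": (22.0, 35.0),
    "Attention Degree": (34.0, 8.0),
    "Cognitive Load": (18.0, 24.0),
    "Brain Load": (2.0, 13.0),
    "Blinks": (6.9, 4.0),
    "Gaze": (97.0, 94.0),
    "Head Movements": (3.0, 4.0),
}

# Assumed cut-off in percentage points; the study does not define one.
ALIGNMENT_THRESHOLD = 5.0


def classify(results, threshold=ALIGNMENT_THRESHOLD):
    """Group metrics by which version scored higher, or as closely aligned."""
    groups = {"ai_higher": [], "original_higher": [], "closely_aligned": []}
    for name, (ai, original) in results.items():
        gap = ai - original  # positive means the AI version scored higher
        if abs(gap) < threshold:
            groups["closely_aligned"].append(name)
        elif gap > 0:
            groups["ai_higher"].append(name)
        else:
            groups["original_higher"].append(name)
    return groups


if __name__ == "__main__":
    for group, names in classify(RESULTS).items():
        print(f"{group}: {', '.join(names)}")
```

With this threshold, blinks fall into the closely aligned group alongside gaze and head movement, whereas the analysis above describes them as slightly elevated in the AI version. A different cut-off would move the boundary, which is why it is kept as an explicit parameter.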

Implications for Pre-Production Testing

The 10.11% average variance figure is the central result. Across eight separate metrics, an AI version produced in four hours for ~$100 landed, on average, within roughly ten percentage points of a $1.6 million professional production. Individual gaps ranged from 1 point (head movement) to 26 points (attention degree).

The gaps on emotional response and brain load are real and should be accounted for. AI-generated content will tend to understate emotional performance of a well-produced final ad.

The structural signals — gaze, attention direction, head movement — were closely aligned. These are the signals that matter most at the ideation stage, when creative teams need to answer:

Does the visual structure direct attention correctly?

Will viewers follow the narrative?

Is pacing holding engagement across the runtime?

Are key visual moments landing where intended?

These questions cannot be answered from a brief, storyboard, or mood board. With AI-generated video and cognitive analysis, they can be answered in hours — before any production budget is committed.

Limitations

This study tests one piece of content against one reference. The 10.11% variance should not be treated as a universal benchmark.

Emotional response gap. The 13-point difference is meaningful. Pre-production testing should treat emotional metrics as directional, not absolute.

Content type specificity. The "1984" ad relies heavily on atmosphere and visual tension. Other creative formats may produce different variance profiles.

Tool development trajectory. AI video tools are advancing rapidly. The variance between AI and professional production will likely narrow over time.

Single study. Replication across multiple formats, budgets, and categories is required before broader generalisations can be made.

Conclusion

A shot-for-shot AI-generated recreation of one of the most studied advertisements in television history produced cognitive results within 10.11% of the original across eight measures.

The gaps are real. Emotional response was 13 points lower. Brain load was 11 points lower. These differences matter for final creative evaluation.

The structural signals were closely aligned. Gaze differed by 3 percentage points. Head movement differed by 1 point. The AI version directed viewers through the same visual story in the same structural way.

If cognitive data on structural performance can be obtained for $100 and four hours — before committing to a six-month, $1.6 million production — the test should be run. Pre-production creative testing has historically been constrained by the absence of content to test. AI-generated video removes that constraint.

KEY FIGURES FROM THIS STUDY

→ 10.11% average variance across 8 metrics

→ AI version: ~$100 and ~4 hours

→ Original: $1.6M over 6 months

→ Gaze variance: 3 points

→ Head movement variance: 1 point (closest metric)

→ Emotional response gap: 13 points

→ Brain load gap: 11 points

Frequently Asked Questions

Can you predict video ad performance before production begins?

Yes, with caveats. This study showed an AI-generated recreation of Apple's "1984" ad produced cognitive results within 10.11% of the original, on average, across eight metrics. Gaze and head movement aligned most closely. The widest gaps overall were in attention degree (26 points) and engagement (18 points), both higher in the AI version. Emotional response showed the largest shortfall (13 points lower in the AI version). Pre-production testing provides reliable data on structural performance and directional data on emotional metrics.

How close is AI-generated video to professional production in cognitive testing?

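In this study, the average variance across eight cognitive and behavioural metrics was 10.11%. Gaze metrics differed by 3 percentage points and head movement by 1 point. Among the metrics where the AI version scored lower, emotional response showed the largest gap at 13 percentage points. Attention degree (26 points) and engagement (18 points) differed more, but in the AI version's favour. AI-generated content performs closely on structural attention metrics and less closely on emotional activation metrics.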

What does North AI measure in its cognitive analysis?

North AI reports eight metrics: five cognitive metrics (engagement, emotional response, attention degree, cognitive load, and brain load), blinks (blink rate and blink synchronisation), gaze (content gaze and screen gaze), and head movement. Both videos are also compared shot by shot. Data is captured second by second. No surveys or self-reported responses are used.

How long does it take to recreate an ad using AI video tools?

The Authentic AI produced a shot-for-shot recreation of Apple's 60-second "1984" ad in approximately four hours using Google Veo 2, CapCut, ElevenLabs, HeyGen, and Google Imagen, at a cost of approximately $100. Full methodology is documented at theauthentic.ai — AI in Action: Recreating Apple's Iconic "1984" Ad in 4 Hours.

Does production budget affect cognitive response?

In this study, the $1.6 million original outperformed on emotional response (+13 points) and brain load (+11 points). On gaze and head movement, both versions produced near-identical results. Production quality has a measurable impact on emotional activation. Structural attention metrics can be tested effectively using AI-generated content regardless of production budget.

North AI · north-ai.com · hello@north-ai.com

North AI uses simulated cognitive testing to predict how video content will perform before production or media spend. Patent-pending technology. £1.3M government-funded. 3+ years R&D. AI recreation produced by The Authentic AI. Original Apple "1984" ad directed by Ridley Scott, produced by Chiat/Day.

Rishi Kapoor
