Why Your Marketing Team Is Flying Blind

The $50 Billion Guessing Game

Brands now spend over $190 billion a year on video advertising globally, and that figure is projected to reach $241 billion by 2028. Yet most of this money goes to creative that was never properly validated before launch.

Here's the dirty secret of modern marketing: when it comes to predicting video performance, the industry is essentially gambling. Ninety-five percent of marketers say video is crucial to their strategy, but only a fraction test their creative with the rigor they apply to media buying.

The result? Eighty-five percent of video campaigns fail to hold attention past 5 seconds. This is not because marketers are incompetent. It is because fast, affordable testing infrastructure hasn't existed.

The Two Bad Options

Marketing teams face a painful choice when launching video creative:

Option 1: Pre-launch testing (slow and expensive)

Traditional market research—focus groups, survey panels, lab-based eye-tracking—costs $20,000 to $50,000 per study. Turnaround takes 2-4 weeks minimum. For fast-moving brands running multiple campaigns, this timeline is unworkable.

The output is often questionable anyway. Focus groups capture conscious opinions, not subconscious attention responses. Surveys suffer from social desirability bias. Participants tell you what they think you want to hear.

Option 2: Launch blind and hope

Skip testing entirely. Publish creative. Wait 4-6 weeks for performance data to accumulate. Analyze. Optimize. Repeat.

The problem? By the time you have data, you've already spent your media budget. If the creative underperformed, that spend is gone. You're optimizing after the waste has occurred.

Neither option is acceptable. Yet these are the only options most teams have.

What's Actually Happening in the First 5 Seconds

To understand why traditional testing fails, we need to understand what the brain does when encountering video content.

Neuroscience research shows that initial emotional response happens in milliseconds—faster than conscious thought. This is evolutionary hardware: our ancestors survived by rapidly assessing new stimuli. Today, that same mechanism decides whether your ad gets attention or gets scrolled past.

After the initial emotional hook, viewers begin processing deeper meaning. Social cognition kicks in as they evaluate the message, characters, and narrative. Executive function helps them assess relevance, credibility, and value.

The critical insight: these processes unfold sequentially. Miss the emotional hook in the first 3-5 seconds, and deeper processing never begins. Your carefully crafted brand message never gets evaluated because the brain already decided to disengage.

Focus groups and surveys can't capture this. They measure reflective evaluation—what happens after the brain has already processed the content consciously. They miss the unconscious gatekeeping that determines whether conscious processing even occurs.

The Metrics That Actually Predict Performance

If traditional testing measures the wrong thing, what should we measure?

The answer comes from combining neuroscience with modern technology:

Gaze synchronization: When multiple viewers look at the same visual elements simultaneously, it indicates shared attention and narrative immersion. Low synchronization suggests the visual storytelling isn't guiding attention effectively.

Blink rate: This correlates with cognitive load and engagement depth. Suppressed blinking indicates peak cognitive engagement—moments where the brain is fully absorbed. Elevated blinking suggests disengagement or confusion.

Emotional activation: Combining facial micro-expression analysis with physiological signals reveals the strength and valence of emotional response. Not just "did they feel something?" but "how intensely, and in what direction?"

Cognitive load index: Derived from eye movement patterns, this metric shows where viewers are working too hard to understand content. High cognitive load often predicts drop-off points.

Content-gaze correlation: AI analysis of video frames combined with viewer attention data reveals which visual elements actually capture focus. Often, the element brands think is most important isn't where viewers look.

These metrics don't replace creative judgment. They inform it with data that was previously invisible.
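As a rough illustration of how three of these metrics can be computed from webcam data, here is a minimal Python sketch. It is not North AI's method. It assumes gaze arrives as normalized (x, y) points per viewer per frame and blinks as timestamps in seconds. The function names, the centroid-dispersion measure of synchronization, and the fixed boxes standing in for AI-detected visual elements are all hypothetical simplifications.

```python
import math
from dataclasses import dataclass

# Assumed input format (hypothetical, not a documented North AI schema):
# gaze[viewer][frame] = (x, y) in normalized screen coordinates (0..1),
# with every viewer resampled to the same frame rate.
Gaze = list[list[tuple[float, float]]]


def gaze_dispersion(gaze: Gaze) -> list[float]:
    """Per-frame mean distance of viewers' gaze points from the group centroid.

    Lower dispersion means viewers look at the same place at the same time,
    i.e. higher gaze synchronization.
    """
    n_frames = min(len(viewer) for viewer in gaze)
    dispersion = []
    for f in range(n_frames):
        points = [viewer[f] for viewer in gaze]
        cx = sum(x for x, _ in points) / len(points)
        cy = sum(y for _, y in points) / len(points)
        dispersion.append(sum(math.dist(p, (cx, cy)) for p in points) / len(points))
    return dispersion


def blink_rate_per_window(blink_times: list[float], duration_s: float,
                          window_s: float = 10.0) -> list[float]:
    """Blinks per minute in consecutive windows of the video.

    Unusually low windows are candidate moments of suppressed blinking
    (absorption). Unusually high ones may flag disengagement or confusion.
    """
    n_windows = math.ceil(duration_s / window_s)
    counts = [0] * n_windows
    for t in blink_times:
        if 0 <= t < duration_s:
            counts[int(t // window_s)] += 1
    rates = []
    for i, count in enumerate(counts):
        length = min(window_s, duration_s - i * window_s)  # last window may be shorter
        rates.append(count * 60.0 / length)
    return rates


@dataclass
class AOI:
    """A static, axis-aligned area of interest (e.g. a logo) in normalized coordinates."""
    name: str
    x0: float
    y0: float
    x1: float
    y1: float

    def contains(self, x: float, y: float) -> bool:
        return self.x0 <= x <= self.x1 and self.y0 <= y <= self.y1


def aoi_hit_share(gaze: Gaze, aois: list[AOI]) -> dict[str, float]:
    """Fraction of all gaze samples that fall inside each area of interest."""
    samples = [p for viewer in gaze for p in viewer]
    return {a.name: sum(a.contains(x, y) for x, y in samples) / len(samples) for a in aois}


if __name__ == "__main__":
    # Toy data: two viewers, two frames. Made-up values, not measured results.
    gaze = [[(0.50, 0.50), (0.20, 0.80)],
            [(0.50, 0.50), (0.80, 0.20)]]
    print(gaze_dispersion(gaze))  # frame 0 fully synchronized, frame 1 split
    print(blink_rate_per_window([1.0, 2.0, 15.0], duration_s=20.0))  # [12.0, 6.0]
    print(aoi_hit_share(gaze, [AOI("logo", 0.4, 0.4, 0.6, 0.6)]))  # {'logo': 0.5}
```

A production system would track moving objects frame by frame rather than using fixed boxes. It would also need to correct for webcam calibration error before comparing viewers.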

The Rise of Remote Neuroscience Testing

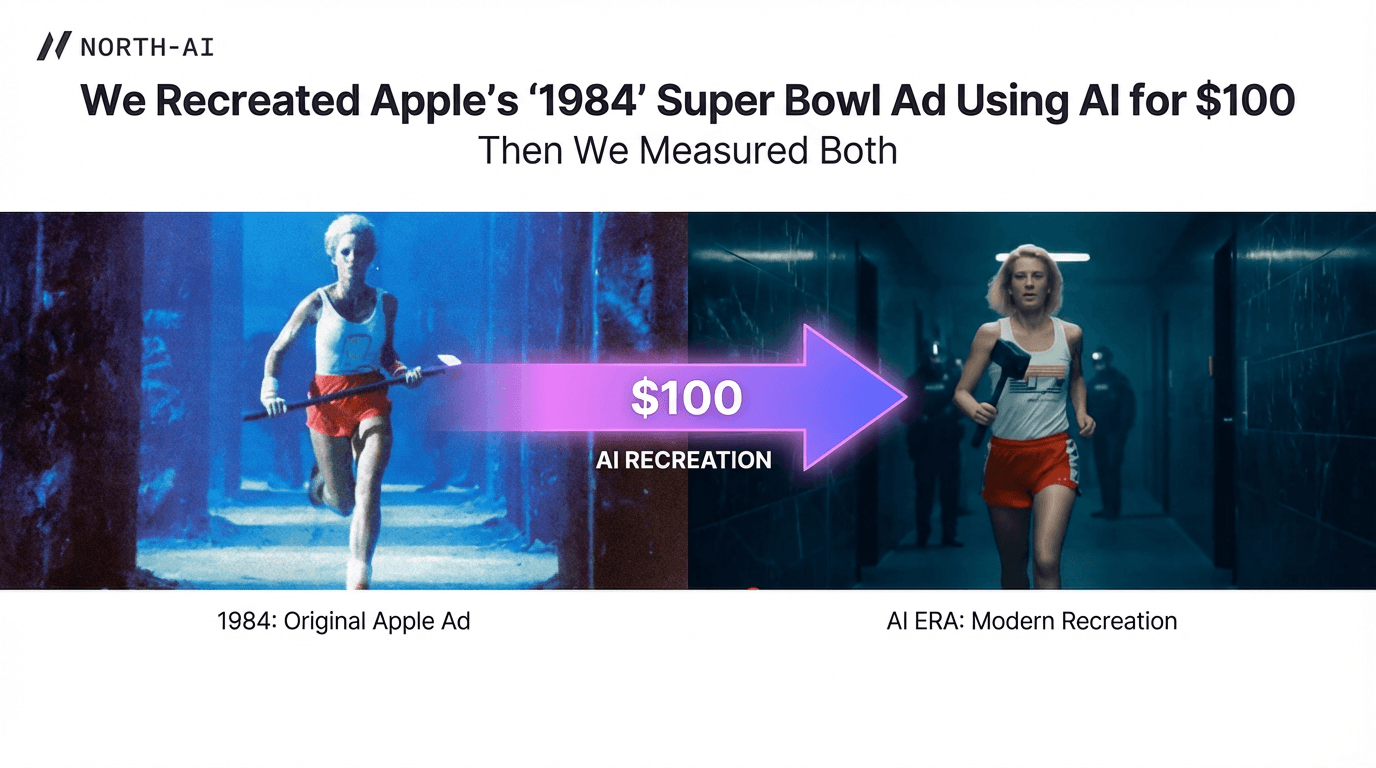

The breakthrough isn't new science—it's new accessibility.

Eye-tracking and facial expression analysis no longer require expensive hardware or laboratory settings. Standard webcams, combined with sophisticated computer vision algorithms, now capture many of the signals that once required $20,000 of specialized equipment.

This shift changes everything. What cost $50,000 in a lab can now be done for a fraction of that cost, at 10x the speed, with participants in their natural viewing environments.

The implications are significant:

Geographic constraints disappear. Test any target demographic anywhere globally. No screening rooms required.

Sample bias is reduced. Viewers in natural home environments behave differently than viewers in artificial lab settings, so their responses sit closer to real-world viewing.

Speed increases dramatically. Results in under 12 hours versus 2-4 weeks.

Iteration becomes possible. At lower cost and faster turnaround, teams can test multiple creative variants—not just the one they hope will work.

Forty-five percent of Fortune 500 companies now experiment with neuromarketing. This isn't fringe technology anymore. It's becoming standard practice for brands that take creative performance seriously.

What Pre-Launch Testing Actually Reveals

When brands test creative before launch, they discover patterns invisible to traditional methods:

Attention cliffs: Specific moments where viewer attention drops sharply. Often these occur at transitions, logo reveals, or pacing changes that felt smooth in the edit suite but create cognitive friction for viewers.

Emotional misalignment: Scenes intended to create excitement that actually generate confusion. Moments meant to be tender that read as slow. The gap between creative intent and viewer response.

Demographic variance: How different audience segments respond differently to the same content. The version that works for 25-34 year-olds may fail with 45-54 year-olds—insights that surface before any media spend.

Competitive context: How your creative performs relative to competitors using the same methodology. Not just "is this good?" but "is this better than alternatives in market?"

This intelligence transforms creative development from art alone to art informed by science.
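To make "attention cliffs" and "demographic variance" concrete, here is a minimal Python sketch. It assumes a hypothetical per-second attention curve for each audience segment, where each value is the share of that segment's viewers judged to be attending. The 0.10 drop threshold, the function names, and the toy curves are illustrative assumptions, not a published methodology or real study data.

```python
# Assumed input (hypothetical): for each audience segment, the share of viewers
# (0..1) judged to be attending to the screen in each second of the video.
AttentionCurve = list[float]


def find_attention_cliffs(curve: AttentionCurve,
                          min_drop: float = 0.10) -> list[tuple[int, float]]:
    """Return (second, drop) pairs where attention falls by at least `min_drop`
    from the previous second. The 0.10 default is an illustrative threshold."""
    cliffs = []
    for t in range(1, len(curve)):
        drop = curve[t - 1] - curve[t]
        if drop >= min_drop:
            cliffs.append((t, round(drop, 3)))
    return cliffs


def segment_gap(curves: dict[str, AttentionCurve], a: str, b: str) -> list[float]:
    """Per-second attention difference between segment a and segment b (a minus b)."""
    return [round(x - y, 3) for x, y in zip(curves[a], curves[b])]


if __name__ == "__main__":
    # Toy curves for a 5-second clip. Made-up numbers, not study results.
    curves = {
        "25-34": [0.95, 0.93, 0.70, 0.68, 0.66],
        "45-54": [0.90, 0.85, 0.80, 0.50, 0.48],
    }
    for segment, curve in curves.items():
        print(segment, find_attention_cliffs(curve))
    print(segment_gap(curves, "25-34", "45-54"))
```

In this toy case, the two segments lose attention at different moments (second 2 versus second 3). That is the kind of segment-specific cliff the list above describes.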

The ROI of Not Guessing

The economics of pre-launch testing are compelling when you consider the alternative.

If 85% of video campaigns fail in the first 5 seconds and you're spending $500,000 on media, then on a simple expected-value view roughly $425,000 of that spend is at risk from untested creative. Pre-launch neuroscience testing typically costs 1-5% of that media spend, or $5,000 to $25,000.

Put differently: you wouldn't launch a $500,000 product without quality testing. Why launch a $500,000 campaign without creative testing?

The data supports this logic. Brands using neuroscience-informed creative testing report 12% higher ad recall, a 4.7% increase in brand lift, and significant reductions in wasted media spend.

AI prediction models now achieve 90% accuracy in predicting winning creative—compared to 52% accuracy for human marketers working from intuition alone. The gap is substantial enough to warrant investment.

Building Testing Into Your Workflow

Integrating pre-launch testing requires shifting when creative decisions get made:

Test earlier. Don't wait for final cuts. Test rough concepts, storyboards, and early edits. The earlier you identify issues, the cheaper they are to fix.

Test more variants. With faster, cheaper testing, you can evaluate multiple creative directions rather than betting on one.

Create feedback loops. Connect pre-launch predictions with post-launch performance data. Calibrate your testing methodology against real outcomes.

Separate hook testing from full-creative testing. The first 5 seconds deserve dedicated evaluation given their disproportionate impact on performance.
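One simple way to close the feedback loop is to check how often the pre-launch score picks the right winner once real results come in. The minimal Python sketch below does this. It assumes each creative variant has a hypothetical pre-launch score and one post-launch outcome (here, an illustrative view-through rate). It reports pairwise winner accuracy, where 0.5 is coin-flip level. The names and numbers are toy assumptions, not a standard metric from any specific platform.

```python
from itertools import combinations


def pairwise_winner_accuracy(predicted: dict[str, float],
                             actual: dict[str, float]) -> float:
    """Share of creative pairs where the variant with the higher pre-launch
    score also had the higher post-launch result (0.5 is coin-flip level).
    Pairs tied on either side are skipped."""
    hits = total = 0
    for a, b in combinations(sorted(predicted), 2):
        dp = predicted[a] - predicted[b]
        da = actual[a] - actual[b]
        if dp == 0 or da == 0:
            continue
        total += 1
        hits += (dp > 0) == (da > 0)
    if total == 0:
        raise ValueError("need at least one pair without ties")
    return hits / total


if __name__ == "__main__":
    # Hypothetical pre-launch scores and post-launch view-through rates (toy values).
    predicted = {"A": 72, "B": 65, "C": 80, "D": 58}
    actual = {"A": 0.031, "B": 0.027, "C": 0.029, "D": 0.018}
    print(pairwise_winner_accuracy(predicted, actual))  # 5 of 6 pairs agree
```

Tracked across campaigns, a figure like this shows whether the testing method is actually predictive for your audiences. It also shows when the method needs recalibrating.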

The goal isn't to replace creative judgment—it's to inform it. The best creative teams use neuroscience data as input, not as dictation.

The Bottom Line

The gap between pre-launch testing capability and marketing needs has closed. What was slow is now fast. What was expensive is now accessible. What required labs now works via webcam.

The brands still launching video without neuroscience validation aren't saving money—they're betting money. And the odds aren't in their favor.

The question isn't whether pre-launch testing works. The research is clear. The question is whether your organization will adopt it before competitors do.

Flying blind was acceptable when no one had instruments. That era is ending.

North AI delivers comprehensive pre-launch video testing with attention heatmaps, cognitive load analysis, and optimization recommendations—in under 12 hours. Visit north-ai.com.

Lucas Cazelli

CPO at North AI

Lucas Cazelli is Head of Product at North AI, leading product strategy and validation for the company’s AI-powered video intelligence platform. His background in computational simulation and risk assessment across large datasets in R&D product development shapes North AI’s predictive modelling, turning raw attention data into reliable performance forecasts. He is based in London, UK.