We Spent £600 to Validate a Billion-Dollar Question: Branding or Storytelling?

12 min

Creative Testing

Neuroscience

Validation Study

How neuroscience testing predicted 1.4x higher video performance—and what it means for your creative strategy

Every year, global brands spend over £500 billion on video advertising. Yet most creative decisions come down to gut instinct, internal politics, or simply copying what worked last time.

The fundamental question keeps every CMO up at night: Will this ad actually work?

And lurking beneath that question is an even more specific dilemma that splits creative teams down the middle:

Should we lead with our brand logo, or hook viewers with a story first?

One camp argues for the Branding-First approach: "Show the logo immediately. Make sure they know it's us. Build brand recall from second one."

The other camp champions Storytelling-First: "Earn their attention first. Hook them emotionally, then reveal the brand. That's how you break through the noise."

Both sides have compelling arguments. Both can point to successful examples. And both absolutely believe they're right.

So who wins?

We decided to find out—using neuroscience.

The Billion-Dollar Guessing Game

Here's the uncomfortable truth about most video advertising: We're winging it.

Consider the stakes:

A 30-second Super Bowl ad costs £5M+ in media alone

Production budgets routinely hit £500K-£2M for a single spot

Total investment: around £7M for 30 seconds of airtime

If that ad bombs? That's £7M vaporized. No refunds. No do-overs.

But it gets worse. The real cost isn't just the money spent on one failed ad—it's the opportunity cost of not running the better version. If your Branding-First approach underperforms by 40% compared to the Storytelling-First version you didn't test, you've effectively wasted 40% of your entire media budget.

For a brand spending £50M annually on video, that's £20M left on the table.
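
The arithmetic behind that figure is simple enough to sketch. The function name and parameters below are illustrative, not part of any North AI tooling, and the calculation assumes, as the paragraph above does, that a performance gap translates one-for-one into wasted spend.

```python
def budget_left_on_table(annual_spend_gbp: int, underperformance_pct: int) -> float:
    """Spend effectively wasted by running the weaker creative.

    Assumes the performance gap maps one-for-one onto wasted media spend,
    which is the simplification used in the text.
    """
    return annual_spend_gbp * underperformance_pct / 100


# £50M annual video budget, weaker version underperforms by 40%
wasted = budget_left_on_table(50_000_000, 40)
print(f"£{wasted / 1_000_000:.0f}M left on the table")  # £20M left on the table
```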

Why Traditional Methods Don't Work

So why don't brands just test their creative before launch? They try. But traditional methods fall short:

Focus Groups tell you what people say they'll do, not what they actually do. Participants become mini creative directors, critiquing ads instead of reacting naturally.

A/B Testing requires launching both versions, splitting your media budget, and hoping you have enough volume to reach statistical significance. It's expensive, slow, and only tells you what worked—not why.

Past Performance is increasingly unreliable. Markets evolve. Audiences change. What worked last quarter might flop today.

Internal Debates often come down to whoever has the loudest voice or the most senior title. The CEO wants the logo front and center. The CMO wants emotional storytelling. The creative director wants artistic freedom. The decision becomes political, not empirical.

What brands really need is simple: Predict performance before launch, affordably and accurately.

That's exactly what we set out to prove.

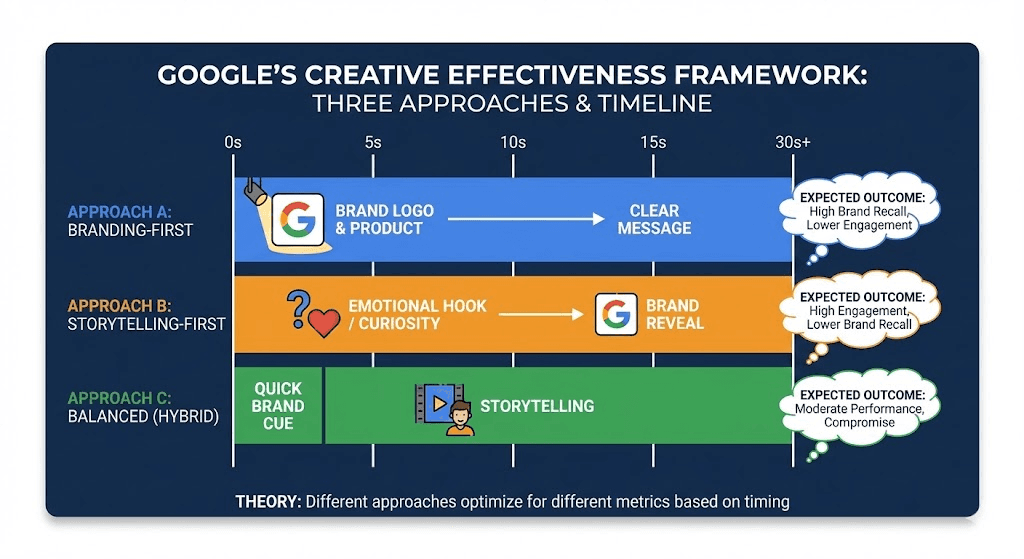

Google's Framework: The Science Behind Creative Effectiveness

Before we ran our study, we needed a solid theoretical foundation. Fortunately, Google had already done the heavy lifting.

In their Think with Google research, Google analyzed thousands of YouTube TrueView ads and identified three distinct creative approaches, each with different performance characteristics:

Approach A: Branding-First

What it looks like: Your logo, product, or brand elements appear in the first 5 seconds. The message is clear from the start: "This is a Coca-Cola ad. Here's our product. Here's what we want you to know."

The theory: Immediate brand recognition maximizes brand recall. Even if viewers skip after 5 seconds, they've seen your brand.

Expected outcome: High brand recall, but potentially lower engagement. Some viewers might see the logo and immediately tune out.

Best for: Established brands with strong recognition—think Nike, Apple, Coca-Cola.

Approach B: Storytelling-First

What it looks like: No branding in the first 5-10 seconds. Instead, you open with an emotional hook, a curious situation, or a compelling character. The brand reveal comes later, after you've earned the viewer's attention.

The theory: Curiosity drives engagement. If you make them want to know what happens next, they won't skip. Then, when you reveal the brand, it feels like a reward, not an interruption.

Expected outcome: High retention and engagement, but potentially lower brand recall. If viewers skip before the reveal, they might not know who made the ad.

Best for: New brands, emotional campaigns, or anytime you need to break through ad fatigue.

Approach C: Balanced (Hybrid)

What it looks like: A quick brand cue in the first 2-3 seconds (a logo flash, a product glimpse), immediately followed by storytelling. You're trying to have it both ways.

The theory: Best of both worlds. You get the brand recognition of Approach A with the engagement of Approach B.

Expected outcome: Moderate performance on both metrics. This is often seen as the "safe" choice—the compromise when stakeholders can't agree.

Best for: Most campaigns, especially when you're uncertain which approach will work.

Google's Surprising Finding

Here's where it gets interesting.

Google partnered with Smart Communications, a telecommunications company in the Philippines, to test all three approaches with the same campaign: "Smart bigBytes Valentines," a story about friends sharing mobile data.

The results surprised everyone:

Winner: Branding-First (Approach A)

"The Strong" version, which showed the Smart logo and product prominently from the start, achieved 1.4x higher completion rates compared to the Storytelling-First version.

Even more surprising: The early branding did NOT increase skip rates. Viewers didn't flee just because they saw a logo. And among viewers who did skip, the Branding-First approach still generated significant brand awareness lift.

The conventional wisdom—"Don't show your logo too early or you'll scare people away"—was wrong. At least in this case.

But that raised new questions:

Is this finding generalizable to other brands and categories?

What if you don't have access to Google's data and resources?

Can you predict this outcome before launch, affordably?

We wanted to answer those questions. So we replicated the study.

The North AI Solution: Testing the Framework

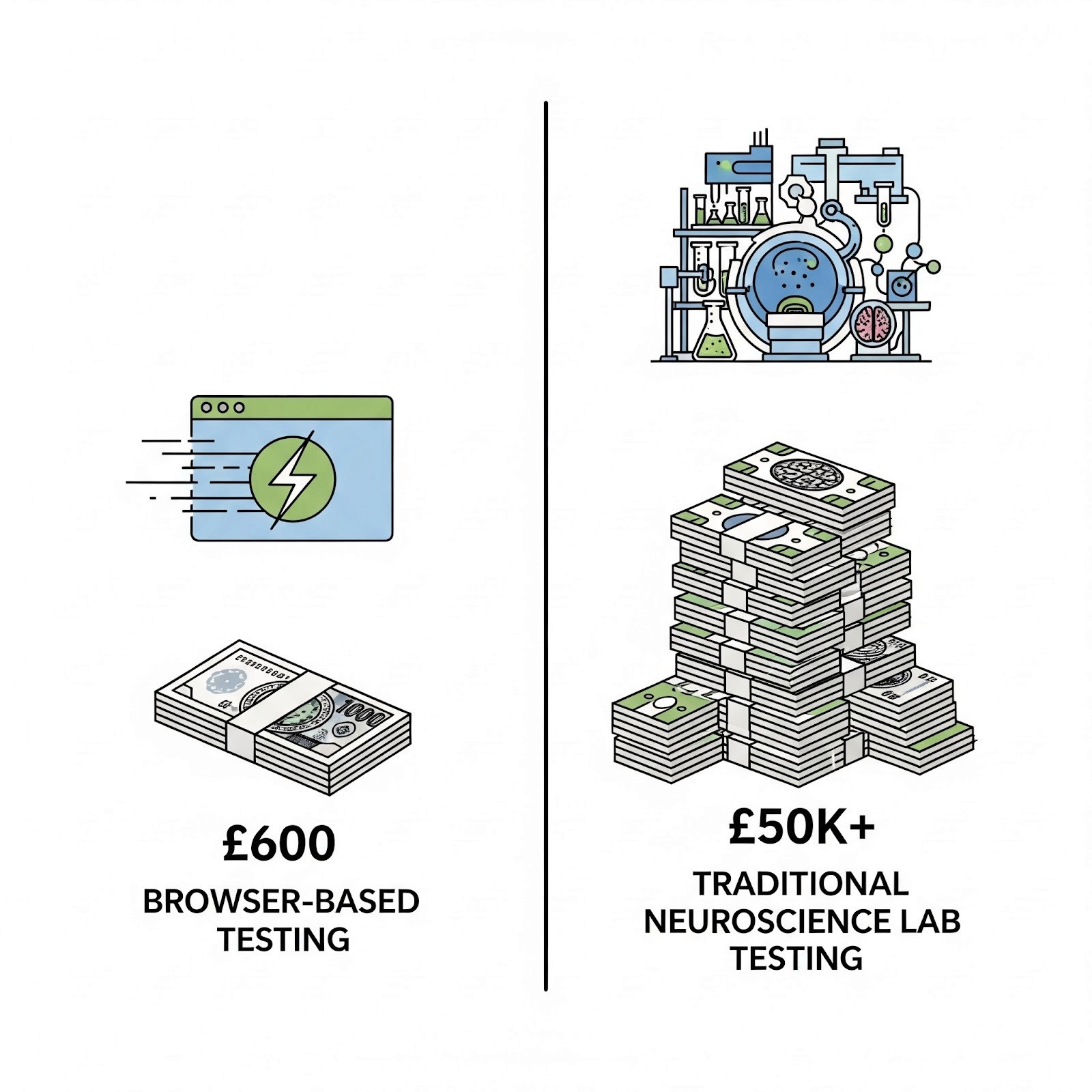

North AI is a browser-based neuroscience testing platform. We help brands predict video ad performance before launch by measuring what traditional surveys can't: attention, emotion, and cognitive load in real-time.

Here's how it works:

Recruit participants via our own pool or use your own customer panel

Participants watch your video in their browser—no app download, no lab visit, just a webcam

We capture neuroscience data in real-time: where they look, how they react emotionally, and how hard they're working to process your message

Post-viewing surveys measure brand recall, purchase intent, and shareability

Automated analysis generates actionable insights in days, not weeks

No expensive lab equipment. No £50K+ budgets. No waiting months for results.

Our ABC Testing Study

We designed our study to answer one specific question:

Can North AI's neuroscience metrics predict which creative approach—Branding-First, Storytelling-First, or Balanced—will perform best?

To test this, we used the same three Smart Communications videos Google had validated:

"Original" (Storytelling-First): Story hook before brand reveal

"The Strong" (Branding-First): Smart logo and product visible early

"The Subtle" (Balanced): Quick brand cue, then storytelling

Our methodology:

33 participants (a mix of target audience and general viewers)

£600 total cost / £200 per video

8 hours from launch to results

Metrics captured:

Attention Degree (where they looked, for how long)

Emotional Response (positive, negative, neutral)

Cognitive Load (how hard they worked to understand)

Engagement Score (overall involvement)

General Score (our predictive effectiveness metric, 0-100)

Brand Recall (post-viewing recognition)

Shareability & Recommendation (intent to act)

If our General Score correctly predicted Google's winner—"The Strong" (Branding-First)—it would validate our technology. If it didn't, we'd know we needed to go back to the drawing board.

The Results: Data Beats Intuition

Here's what we found:

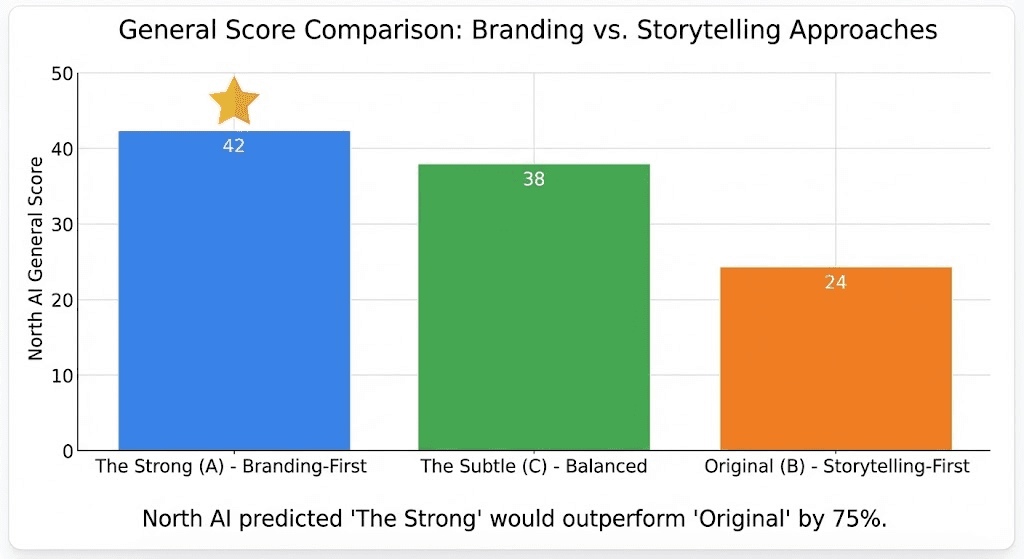

North AI Correctly Predicted the Winner

North AI's General Scores:

"The Strong" (Branding-First): 42

"The Subtle" (Balanced): 38

"Original" (Storytelling-First): 24

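Our General Score put "The Strong" 75% ahead of "Original" (42 vs. 24). That matches the direction of Google's finding that Branding-First achieved 1.4x (40%) higher completion rates, although the two figures measure different things, so the magnitudes are not directly comparable.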
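
The comparison comes down to two ratios. The sketch below uses only the numbers reported in this article; the variable and function names are our own for illustration. It checks that both results point at the same winner and shows the size of each gap.

```python
# General Scores reported in this study
general_scores = {"The Strong": 42, "The Subtle": 38, "Original": 24}

# Completion-rate multiple Google reported for Branding-First vs Storytelling-First
google_completion_multiple = 1.4


def uplift_pct(multiple: float) -> float:
    """Convert a multiple such as 1.4x into a percentage uplift (40%)."""
    return round((multiple - 1) * 100, 1)


score_multiple = general_scores["The Strong"] / general_scores["Original"]
predicted_winner = max(general_scores, key=general_scores.get)

print(predicted_winner)                        # The Strong
print(uplift_pct(score_multiple))              # 75.0
print(uplift_pct(google_completion_multiple))  # 40.0
```

The General Score and completion rate are different measures, so the useful check is agreement on the winner and the ranking rather than on the exact percentage.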

We got it right.

But the real insights came from digging into why.

The Metrics Breakdown

| Metric | Original (B) | The Strong (A) | The Subtle (C) |
|---|---|---|---|
| General Score | 24 | 42 ⭐ | 38 |
| Attention Degree | 0.29 | 0.26 | 0.31 |
| Emotional Response | 0.58 | 0.57 | 0.58 |
| Cognitive Load | 0.38 | 0.39 | 0.37 |
| Engagement (Survey) | 4.1/5 | 4.1/5 | 4.1/5 |
| Brand Recall | 100% | 100% | 100% |
| Shareability | 80% | 82% ⭐ | 63% |
| Recommendation | 100% | 100% | 75% |

Several patterns jumped out:

1. Branding-First didn't scare viewers away

Engagement was identical across all three approaches (4.1/5)

100% of viewers of "The Strong" would recommend the brand

The myth that "early branding kills engagement" did not hold up in our data

2. Storytelling-First didn't hurt brand recall

All three videos achieved 100% brand recall

Even "Original," which delayed the brand reveal, was perfectly remembered

You don't have to sacrifice brand recall for storytelling

3. The Balanced approach didn't win

"The Subtle" had the highest attention (0.31) and lowest cognitive load (0.37)

But it scored lowest on shareability (63%) and recommendation (75%)

Playing it safe diluted impact: viewers recalled the brand but were less inclined to share or recommend the ad

4. Branding-First had a hidden advantage: shareability

"The Strong" had the highest shareability (82%)

Clear branding made it easier to recommend: "Have you seen the new Smart ad?"

Vague or subtle branding makes content harder to share
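
These patterns can be read straight off the metrics table. As a minimal sketch, the snippet below copies four rows of the table into a dictionary and finds the leader and laggard for each; the helper names are ours and purely illustrative.

```python
# Values copied from the metrics table; keys are the three video names.
metrics = {
    "Attention Degree": {"Original": 0.29, "The Strong": 0.26, "The Subtle": 0.31},
    "Cognitive Load": {"Original": 0.38, "The Strong": 0.39, "The Subtle": 0.37},
    "Shareability (%)": {"Original": 80, "The Strong": 82, "The Subtle": 63},
    "Recommendation (%)": {"Original": 100, "The Strong": 100, "The Subtle": 75},
}


def highest(metric: str) -> list[str]:
    """Videos with the highest value for a metric (ties are all returned)."""
    row = metrics[metric]
    top = max(row.values())
    return [video for video, value in row.items() if value == top]


def lowest(metric: str) -> list[str]:
    """Videos with the lowest value for a metric (ties are all returned)."""
    row = metrics[metric]
    bottom = min(row.values())
    return [video for video, value in row.items() if value == bottom]


print(highest("Attention Degree"))    # ['The Subtle']
print(lowest("Cognitive Load"))       # ['The Subtle']
print(highest("Shareability (%)"))    # ['The Strong']
print(lowest("Recommendation (%)"))   # ['The Subtle']
```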

What Made "The Strong" Win?

Looking at the qualitative feedback, a clear pattern emerged. Participants remembered specific moments:

"When the friend shared data to him to undo the liked post"

"When the guy accidentally liked his ex's photo"

"When the friend showed up and shared data with his friend who is in need"

The story was relatable (we've all accidentally liked an ex's photo). The product benefit was clear (Smart helps friends help friends). And the branding was unmistakable throughout.

"The Strong" didn't win despite the early branding. It won because of it. The branding provided context that made the story more memorable and shareable.

The Statistical Rigor

Some might question whether 33 participants is enough. Fair question.

We calculated a Cohen's d effect size of 1.90. By the usual convention, anything above 0.8 counts as a large effect, so this is very large. The difference between approaches was so pronounced that even a small sample could detect it reliably.

For comparison, most marketing studies aim for a Cohen's d of 0.5 (medium effect). Ours was nearly 4x that threshold.

Translation: The signal was loud enough that we didn't need a massive sample to hear it.
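
For readers unfamiliar with the statistic, Cohen's d is the difference between two group means divided by their pooled standard deviation. The sketch below shows the standard pooled-variance form. This article does not publish the raw scores or say which two groups were compared, so the two lists are made-up placeholder values, not study data.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a: list[float], group_b: list[float]) -> float:
    """Cohen's d for two independent groups, using the pooled sample variance."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)


# Placeholder values for illustration only (NOT data from the study)
toy_a = [2, 4, 6]
toy_b = [1, 3, 5]
print(cohens_d(toy_a, toy_b))  # 0.5, a "medium" effect

# The reported effect relative to the medium-effect benchmark
print(round(1.90 / 0.5, 1))    # 3.8
```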

That said, we designed for 171 participants (57 per video) to achieve full statistical power. The 33-participant pilot was sufficient for directional validation, and we're expanding the study for peer-reviewed publication.
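
To see why effect size drives sample size, a common normal-approximation formula for comparing two group means is n per group ≈ 2(z₁₋α/₂ + z_power)² / d². The sketch below applies it with a two-sided α of 0.05 and 80% power. Those are conventional defaults assumed here rather than settings stated for this study, and this is not the calculation that produced the 171-participant design.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(d: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate participants needed per group to detect effect size d.

    Normal approximation for a two-sided, two-sample comparison of means.
    It is slightly optimistic for very small groups, where a t-based
    calculation would ask for a few more participants.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    return ceil(2 * (z_alpha + z_power) ** 2 / d ** 2)


print(n_per_group(0.5))   # 63 per group for a medium effect
print(n_per_group(1.90))  # 5 per group for an effect as large as d = 1.90
```

Under these assumptions, a very large effect needs only a handful of participants per group, while a medium effect needs dozens.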

But here's the key insight: £600 and 33 people were enough to match Google's findings. You don't need a six-figure budget to get valid insights.

Practical Takeaways: How to Use This Framework

So what should you do with this information?

When to Use Each Approach

Use Branding-First when:

✅ You have strong brand recognition (viewers know your logo instantly)

✅ You're in a competitive category (need to stand out immediately)

✅ Your product is the hero (the thing itself is interesting)

✅ You need to drive brand lift metrics

Examples: Coca-Cola launching a new flavor, Nike releasing a new shoe, Apple announcing a new product.

Use Storytelling-First when:

✅ You're a new or challenger brand (viewers don't know you yet)

✅ You have an emotional story that stands alone (curiosity is your hook)

✅ Your audience has ad fatigue (they skip anything that looks like an ad)

✅ You need to drive engagement and social sharing above brand recall

Examples: Startup launch videos, cause marketing campaigns, challenger brand breakthrough.

Use Balanced when:

⚠️ You're unsure which will work (hedge your bets)

⚠️ You have stakeholder disagreement (compromise position)

⚠️ You're testing for the first time (reduce risk)

But know this: Balanced is the "safe" choice, not necessarily the best choice. Our data showed it scored lowest on shareability and recommendation. Sometimes playing it safe means being forgettable.

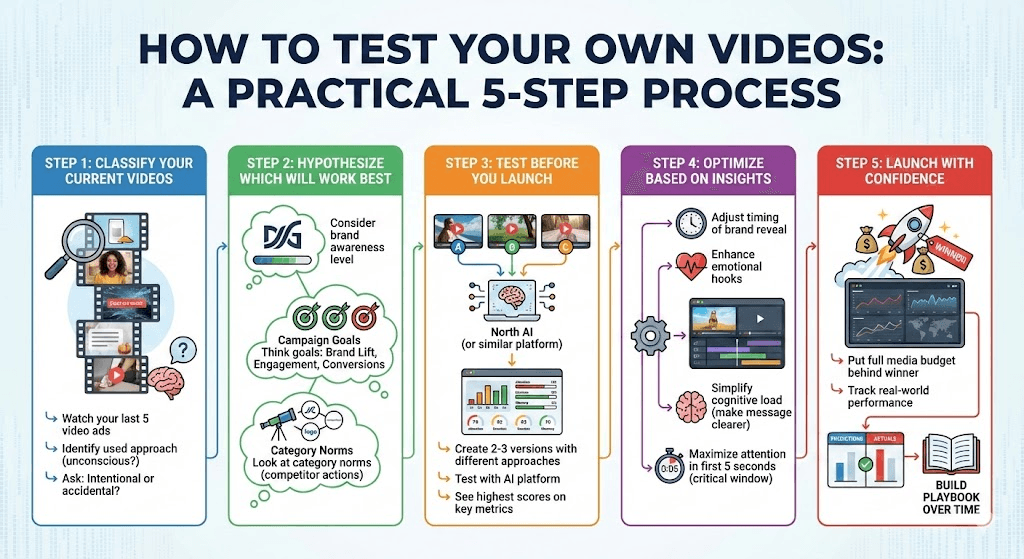

How to Test Your Own Videos

Here's a practical process:

Step 1: Classify your current videos

Go watch your last 5 video ads

Identify which approach you've been using (probably unconsciously)

Ask: Are we doing this intentionally or by accident?

Step 2: Hypothesize which will work best

Consider your brand awareness level

Think about your campaign goals (brand lift vs engagement vs conversions)

Look at category norms (what do competitors do?)

Step 3: Test before you launch

Create 2-3 versions with different approaches

Test with North AI (or similar platform)

See which scores highest on the metrics that matter to you

Step 4: Optimize based on insights

Adjust timing of brand reveal

Enhance emotional hooks

Simplify cognitive load (make your message clearer)

Maximize attention in first 5 seconds (that's the critical window)

Step 5: Launch with confidence

Put your full media budget behind the winner

Track real-world performance

Compare predictions to actuals (this builds your playbook over time)
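
The last item in Step 5, comparing predictions to actuals, can be as simple as checking whether the pre-launch winner was also the in-market winner. The sketch below is a hypothetical illustration: the function name, variant labels, and every number in it are placeholders, not results from this study or from any platform.

```python
def same_winner(predicted: dict[str, float], actual: dict[str, float]) -> bool:
    """True if the top-scoring variant before launch also won in market."""
    predicted_winner = max(predicted, key=predicted.get)
    actual_winner = max(actual, key=actual.get)
    return predicted_winner == actual_winner


# Placeholder numbers for illustration only
pre_launch_scores = {"variant_a": 55, "variant_b": 40, "variant_c": 48}
completion_rates = {"variant_a": 0.31, "variant_b": 0.22, "variant_c": 0.27}

print(same_winner(pre_launch_scores, completion_rates))  # True
```

Logging this result for each campaign gives a running hit rate, which is one way to build the playbook over time.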

What This Means for the Future of Creative Testing

Our study points to three things:

1. Neuroscience testing works—and it's accessible

You don't need a six-figure budget and a university research lab. Browser-based testing with small samples can deliver valid, actionable insights. We spent £600 and matched findings from a full Google Brand Lift Study.

2. Creative decisions can be data-driven

The debate over Branding vs Storytelling doesn't have to come down to opinions and politics. You can test, measure, and predict. This levels the playing field: Junior creatives can validate ideas against senior executives' gut instincts with data.

3. Conventional wisdom doesn't always hold

Our data contradicted two widely held beliefs:

"Early branding scares viewers away" → False. Engagement was identical.

"Balanced approach is always best" → False. It scored lowest on shareability.

The only way to know what works for your brand is to test.

Stop Guessing. Start Testing.

Every day, brands invest millions in video creative without knowing if it will work. They debate. They workshop. They compromise. And then they launch and hope for the best.

There's a better way.

At North AI, we believe creative decisions should be data-driven, not gut-driven. We've built a platform that makes neuroscience testing affordable, fast, and actionable—so every brand can predict performance before they launch.

Our ABC Testing study proved it works. We correctly predicted which creative approach would win, with a small sample and a tiny budget. And now you can do the same.

Ready to Test Your Creative?

Download the full whitepaper for a deep-dive into our methodology, statistical analysis, and detailed results.

Book a demo to see how North AI works for your videos. We'll show you exactly how we capture attention, emotion, and cognitive load—and what it means for your creative strategy.

Start a pilot to test 3 of your videos and get actionable insights in days. See which approach—Branding-First, Storytelling-First, or Balanced—works best for your brand.

Visit north-ai.com or connect with me on LinkedIn for more insights on neuroscience-backed creative testing.

About the Author:

Lucas Cazelli is Chief Product Officer at North AI, where he leads product strategy, validation research, and customer partnerships. He specializes in translating neuroscience into actionable business insights for brands and agencies. Previously, he's worked with major brands on creative optimization and performance marketing.

Related Resources:

[Download the Full Whitepaper] → Deep-dive methodology and results

[See How North AI Works] → Platform demo video

[Read More Case Studies] → Other validation studies

[Follow on LinkedIn] → Lucas Cazelli's insights on creative effectiveness

Tags: #VideoAdvertising #CreativeTesting #Neuroscience #MarketingROI #BrandStrategy #AdTech #NorthAI #CreativeEffectiveness #DataDrivenMarketing

Lucas Cazelli

CPO at North AI

Lucas Cazelli is Head of Product at North AI, leading product strategy and validation for the company’s AI-powered video intelligence platform. His background in computational simulation and risk assessment across large datasets in R&D product development shapes North AI’s predictive modelling, turning raw attention data into reliable performance forecasts. He is based in London, UK.
